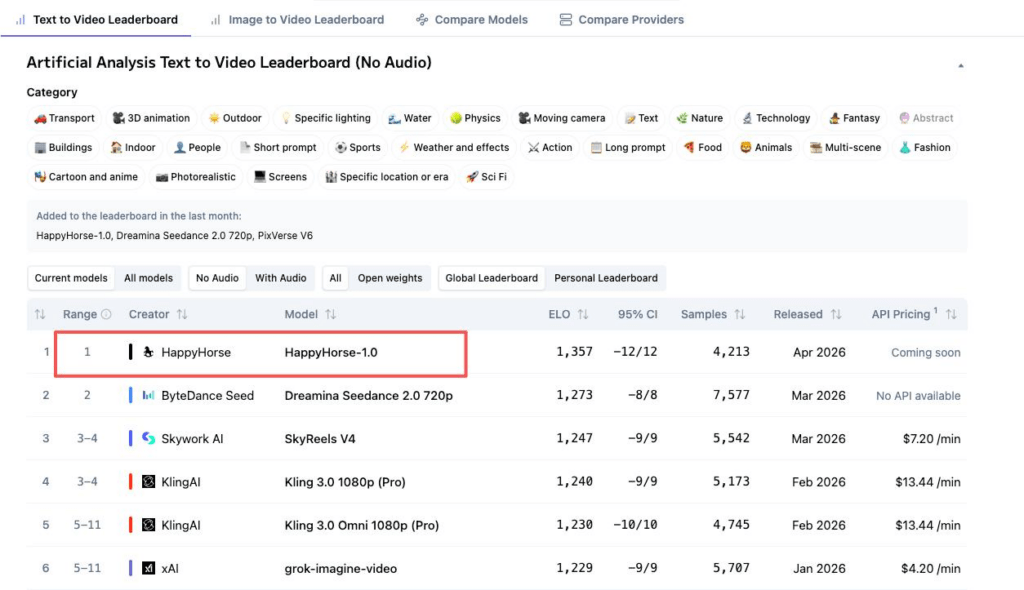

Last Thursday evening I was prepping a batch of product video assets for a client — the usual image-to-video pipeline stuff — and a friend pinged me: “Camille, have you seen HappyHorse?” I hadn’t. I opened the Artificial Analysis Video Arena leaderboard, and there it was — a name I’d never seen, sitting at #1 in both text-to-video and image-to-video. No team page. No brand I recognized. Just the words “fully open source” in bold on the landing page.

If you’re a developer evaluating AI video models for a product pipeline, or someone on a small team trying to figure out whether this thing is worth reorganizing your workflow around — you probably had the same reaction I did. Curiosity, then one click, then: the GitHub link said “coming soon.” The Hugging Face link went nowhere.

That little gap between the claim and the download button is exactly what this piece is about. Not a hype summary. Just — what can we actually confirm right now?

What “Open Source” Means for an AI Video Model

The Open Source Initiative’s AI Definition (OSAID 1.0) sets a clear standard: an open-source AI system should let anyone use, study, modify, and share it — with access to the components that make modification meaningful.

Code, Weights, License — What Needs to Be Public

For a video model, that means downloadable model weights (without them, you can’t run the model locally, fine-tune, or verify performance claims), inference code, a license file spelling out usage rights, and a model card documenting architecture and limitations.

“Open weights” is a step below — you can run and fine-tune, but training data stays private. “Open access” — a demo or API with nothing downloadable — is something else entirely. These distinctions matter when you’re deciding whether to build a workflow around a model.
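To make those three tiers concrete, here is a minimal Python sketch of how I think about sorting a release. The `Release` fields and the rules in `openness_tier` are my own simplification for illustration, not the OSAID text itself, and the "unverified" bucket is a label I made up for releases with nothing to check.

```python
from dataclasses import dataclass


@dataclass
class Release:
    """What a model release actually makes public (hypothetical field names)."""
    weights: bool = False
    inference_code: bool = False
    license_file: bool = False
    model_card: bool = False
    training_data_info: bool = False
    hosted_demo_or_api: bool = False


def openness_tier(r: Release) -> str:
    """Sort a release into a rough tier. A simplification, not a legal test."""
    runnable = r.weights and r.inference_code and r.license_file
    if runnable and r.model_card and r.training_data_info:
        return "open source"
    if runnable:
        return "open weights"
    if r.hosted_demo_or_api:
        return "open access"
    return "unverified"


if __name__ == "__main__":
    # Weights, code, and license are public, but training data details are not.
    print(openness_tier(Release(weights=True, inference_code=True, license_file=True)))
    # Nothing downloadable and nothing to call.
    print(openness_tier(Release()))
```

The point of writing it down this way is that the tier depends only on what is published, never on what a landing page says.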

What HappyHorse-Related Sites Claim

Everything Listed as “Released”

The language on happyhorse-ai.com is confident: base model, distilled model, super-resolution module, and inference code are described as released, with commercial usage rights included. A GitHub README describes the architecture in detail, but the same README includes a warning: “The model weights and inference code are marked ‘coming soon.’”

Documentation says released. Download links say not yet. That’s the core issue.

15B Parameters, DMD-2 Distillation, Commercial Rights

The claimed specs are specific: 15 billion parameters, a unified 40-layer self-attention Transformer, DMD-2 distillation to 8 denoising steps, joint audio-video generation, and roughly 38 seconds for 1080p on a single H100.

These specs closely match daVinci-MagiHuman, an open-source project from Sand.ai and SII-GAIR Lab with actual downloadable weights on Hugging Face under Apache 2.0. A 36Kr investigation found near-identical benchmarks and site structures between the two. Community consensus — unconfirmed — is that HappyHorse is an optimized iteration of daVinci-MagiHuman, submitted to the arena to test user preference before commercialization.

But community consensus isn’t official confirmation. And HappyHorse-1.0, under that name, has released nothing downloadable.

What We Could Actually Verify

GitHub and Hugging Face Link Status

As of April 10, 2026, the GitHub repo is readable but contains no weights, no inference code, and no license file. The Hugging Face profile shows no public models: the path happy-horse/happyhorse-1.0 returned a 401 error as recently as April 9, per independent verification.
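If you want to repeat this kind of check yourself, a rough sketch using only the Python standard library might look like the following. The `https://huggingface.co/` prefix is my assumption about where the happy-horse/happyhorse-1.0 path lives, and the status labels are my own reading of the codes, not anything official.

```python
import urllib.error
import urllib.request


def fetch_status(url: str, timeout: float = 10.0) -> int:
    """Send a HEAD request and return the HTTP status code."""
    req = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(req, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        # 4xx and 5xx responses arrive as exceptions; the code is still useful.
        return err.code


def interpret_status(code: int) -> str:
    """Map a status code to a plain-language label (my own wording)."""
    if 200 <= code < 300:
        return "reachable"
    if code in (401, 403):
        return "not publicly accessible"
    if code == 404:
        return "not found"
    return f"inconclusive ({code})"


def check_links(urls, fetch=fetch_status):
    """Check each URL; `fetch` is injectable so the logic can be tested offline."""
    return {url: interpret_status(fetch(url)) for url in urls}


if __name__ == "__main__":
    # Assumed URL built from the repo path mentioned above.
    for url, verdict in check_links(["https://huggingface.co/happy-horse/happyhorse-1.0"]).items():
        print(url, "->", verdict)
```

A reachable page still proves nothing about weights. It only tells you the link resolves, so treat this as the first step before looking at the actual file listing.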

Has Any Third Party Deployed It Locally?

No. Not under the HappyHorse name. No blog post, no Discord thread, no Replicate or fal.ai integration. These platforms depend on publicly available weights, and those don’t exist yet.

If the underlying model is daVinci-MagiHuman, that one has been deployed by community testers — but it requires H100-class hardware, and early reports note it’s strongest in single-character portrait scenarios with quality gaps in multi-subject scenes.

Why This Matters for Developers and API Teams

HappyHorse-1.0 holds Elo 1387 in T2V and 1414 in I2V (both no-audio) on the Artificial Analysis Video Arena — #1 in both categories. Those scores are from blind user votes, and the quality signal is real. But knowing a model wins comparisons doesn’t help if you can’t call it.

Self-Hosting and Integration Need Downloadable Artifacts

If your team evaluates AI video models for production — building a generation feature, running batch pipelines — you need weights, inference code, documentation, and a license file. HappyHorse has none of these today.
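That checklist is easy to turn into a quick audit over a repo's file listing. The sketch below is a heuristic under my own assumptions: the weight file suffixes, the use of `.py` files as a stand-in for inference code, and the README-as-documentation rule are guesses about common conventions, not a standard.

```python
from pathlib import PurePosixPath

# Common weight file suffixes (an assumption; real repos may use others).
WEIGHT_SUFFIXES = {".safetensors", ".bin", ".pt", ".pth", ".ckpt"}


def audit_files(paths):
    """Report which of the four artifacts appear in a list of repo file paths."""
    names = [PurePosixPath(p) for p in paths]
    return {
        "weights": any(n.suffix.lower() in WEIGHT_SUFFIXES for n in names),
        "inference_code": any(n.suffix.lower() == ".py" for n in names),
        "license": any(n.name.upper().startswith(("LICENSE", "LICENCE")) for n in names),
        "documentation": any(n.name.upper().startswith("README") for n in names),
    }


def missing(paths):
    """List the artifacts that are absent, in checklist order."""
    return [name for name, present in audit_files(paths).items() if not present]


if __name__ == "__main__":
    # A repo that contains only a README, as an example input.
    print(missing(["README.md"]))
    # A hypothetical complete release.
    print(missing(["README.md", "LICENSE", "inference.py", "model.safetensors"]))
```

Anything in the `missing` list is a reason to hold off on integration work, whatever the landing page says.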

For comparison, the LTX-2 series from Lightricks leads verified open-weights models on the same leaderboard (LTX-2 Pro at Elo 1131 T2V, 1193 I2V). A real step behind HappyHorse’s scores, but with actual weights on Hugging Face, Apache 2.0 licensing, and active community deployment. That’s the difference between a model you can evaluate and one you can read about.
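To get a feel for how big that Elo gap is, here is a small sketch that converts a rating difference into an expected head-to-head preference rate. It assumes the textbook Elo formula with a 400-point scale. Artificial Analysis may compute its ratings differently, so read the numbers as a rough intuition, not a measured win rate.

```python
def expected_win_rate(elo_a: float, elo_b: float) -> float:
    """Expected share of pairwise votes won by A under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((elo_b - elo_a) / 400.0))


if __name__ == "__main__":
    # Scores quoted in this article: HappyHorse-1.0 vs LTX-2 Pro.
    t2v = expected_win_rate(1387, 1131)
    i2v = expected_win_rate(1414, 1193)
    print(f"T2V: {t2v:.0%}")
    print(f"I2V: {i2v:.0%}")
```

Under that assumption, HappyHorse would be preferred in roughly 81% of text-to-video matchups and about 78% of image-to-video matchups against LTX-2 Pro. That is a clear lead, and it still does nothing for you until the weights exist.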

No Published License Means No Commercial Clarity

The sites mention commercial rights, but there’s no license file in the repo. Until one is published, those claims are unverifiable.

What to Watch for Next

Stealth-drop-then-release has become a pattern this year — Pony Alpha appeared anonymously and turned out to be Z.ai’s GLM-5. HappyHorse fits the template. It might materialize next week. It might not.

Public repo with model card — A GitHub or Hugging Face release with actual weights and a license file. That’s the clearest signal.

Independent reproduction — Someone outside the creators downloading weights, running inference, publishing results. That’s when specs become information instead of marketing.

Team identity disclosure — Some sites attribute it to the Future Life Lab team at Taotian Group (Alibaba) led by Zhang Di; other analysis points to Sand.ai. Neither is officially confirmed through a primary source.

One more thing worth noting — regardless of which model powers your video pipeline, input quality sets the ceiling. Every I2V model amplifies whatever’s in the source asset. Dirty edges, leftover halos, compression artifacts — they all get baked into every frame. Clean input first, generate second. That order never changes.

Done. No overworking it.

FAQ

Can I commercially use HappyHorse-1.0 outputs?

No published license exists under the HappyHorse name. If the underlying model is daVinci-MagiHuman, its Apache 2.0 license permits commercial use, but confirm with your own legal review.

How does HappyHorse compare to open-source models like LTX?

Higher Elo scores, but LTX-2 Pro has actual weights, documented requirements, and community support. Right now the quality signal and practical accessibility point in different directions.

If it becomes truly open source, could I integrate it via API?

Once weights are public, third-party platforms like Replicate and fal.ai typically follow within days. The weights are the bottleneck.

See you next time — may your assets always stay clean.
