If you’ve been anywhere near AI video Twitter this past week, you’ve probably seen the name HappyHorse scroll past at least three times before your morning coffee. I watched it happen in real time—opened my feed on April 8, sat down with a matcha, and the timeline was just… horses. Mystery horses. Everyone asking the same question.

Here’s why it matters if you make product videos or social content for a living: a model nobody had heard of walked onto the most-watched AI video leaderboard and sat down in first place, with no team name, no press release, and not a single demo reel. Understanding how this ranking works is more useful than the ranking itself. That’s what this piece is about.

What Happened — HappyHorse-1.0 on Artificial Analysis

April 7, 2026: A Pseudonymous Model Appears

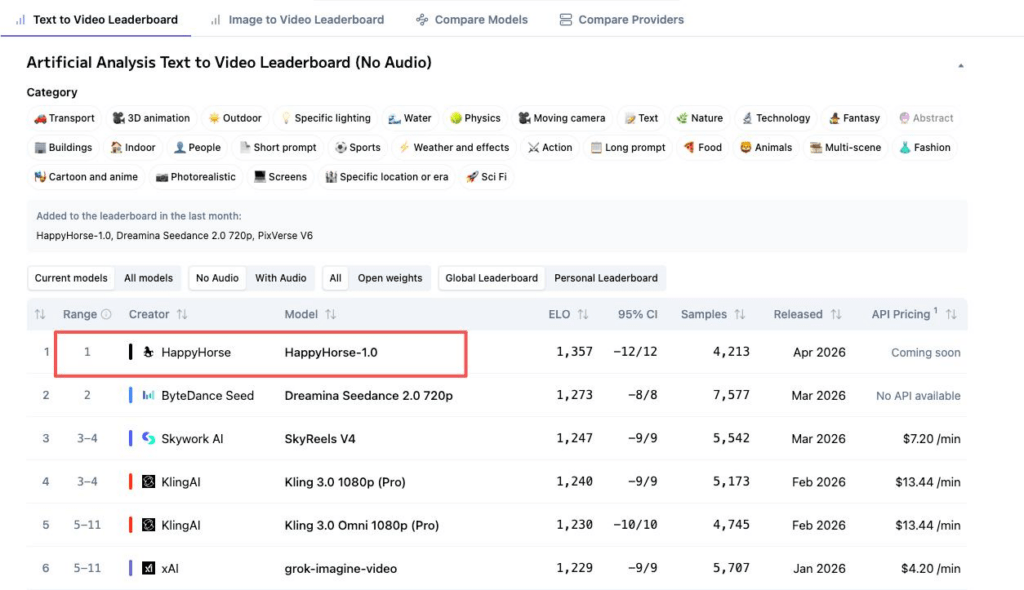

Around April 7–8, Artificial Analysis added a new entry to its Video Arena under the name HappyHorse-1.0. No company logo. No team bio. Just a model, submitted pseudonymously, competing blind against Seedance 2.0, Kling 3.0, and everything else on the board. Within hours, it climbed to #1 in both text-to-video and image-to-video.

Brent Lynch posted “WHO IS HAPPYHORSE?” on X, and that captured the mood. By April 10, Artificial Analysis confirmed the model came from Alibaba-ATH, Alibaba’s AI innovation unit. Mystery partially solved. But the ranking still needed explaining.

Current Positions Across Four Leaderboards

As of mid-April 2026 (scores shift daily—always check the live board):

| Category | HappyHorse-1.0 (Elo, rank) | Closest rival (Elo, rank) | Gap (Elo points) |
| --- | --- | --- | --- |
| T2V, no audio | 1,387 (#1) | Seedance 2.0, 1,274 (#2) | +113 |
| I2V, no audio | 1,415 (#1) | Seedance 2.0, 1,358 (#2) | +57 |
| T2V, with audio | 1,236 (#1) | Seedance 2.0, 1,224 (#2) | +12 |
| I2V, with audio | 1,163 (#2) | Seedance 2.0, 1,165 (#1) | −2 |

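The no-audio categories show clear gaps of 113 and 57 points. With audio, the margins shrink to 12 and 2 points, which is essentially a coin flip. That pattern is worth noticing: HappyHorse’s edge shows up in the visuals, and adding audio erases most of it.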

How the Artificial Analysis Video Arena Works

Blind User Voting, Not Automated Benchmarks

The Artificial Analysis Video Arena is not a benchmark where a lab submits its own test scores. You’re shown two videos generated from the same prompt, and you pick which one you prefer. No labels. No brand names. Just two clips and your gut reaction. The ranking comes entirely from real people making real choices.

Elo Mechanics and What the Numbers Mean

The system uses Elo ratings, the same math behind chess rankings, originally designed by Arpad Elo in the 1960s. When a user picks Video A over Video B, the winner gains points and the loser drops the same amount. Upsets cause bigger swings, because the size of the adjustment scales with how unexpected the result was.
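To make the “upsets cause bigger swings” point concrete, here is a minimal sketch of a textbook Elo update. The step size `k = 32` and the two example ratings are my own assumptions for illustration; the article doesn’t say what step size or exact fitting method Artificial Analysis uses, so treat this as the classic chess-style formula, not the arena’s actual code.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A is preferred over B under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update(winner: float, loser: float, k: float = 32.0) -> tuple[float, float]:
    """Return (new_winner, new_loser) after a single vote.

    k is an assumed step size, not a value published for the arena.
    """
    # The less expected the win, the larger the adjustment.
    surprise = 1.0 - expected_score(winner, loser)
    delta = k * surprise
    return winner + delta, loser - delta


# Hypothetical ratings: the favorite (1400) beats the underdog (1200).
favorite_wins = update(1400.0, 1200.0)
# Same pairing, but the underdog takes the vote.
upset = update(1200.0, 1400.0)

print("favorite gains:", round(favorite_wins[0] - 1400.0, 1))
print("underdog gains:", round(upset[0] - 1200.0, 1))
```

With these made-up numbers, the underdog’s win moves the ratings roughly three times as far as the favorite’s win does, and in both cases the points one side gains are exactly the points the other side loses.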

Practically: a 100-point gap translates to roughly a 64% expected win rate. HappyHorse’s 113-point lead over Seedance in T2V without audio means users choose its output about 66% of the time, or roughly two votes out of three. The 2-point gap in I2V with audio? Statistical noise.
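If you want to run that conversion yourself, the standard Elo formula turns a rating gap into an expected win rate. The sketch below applies it to the four gaps from the table above; the function name `win_probability` is just my label, and the assumption is that the arena’s scores behave like ordinary Elo ratings on the usual 400-point scale.

```python
def win_probability(elo_gap: float) -> float:
    """Expected win rate for the higher-rated model, given its lead in Elo points."""
    return 1.0 / (1.0 + 10 ** (-elo_gap / 400.0))


# HappyHorse-1.0 minus Seedance 2.0, mid-April 2026 snapshot from the table.
gaps = {
    "T2V, no audio": 113,
    "I2V, no audio": 57,
    "T2V, with audio": 12,
    "I2V, with audio": -2,
}

for category, gap in gaps.items():
    print(f"{category}: {win_probability(gap):.1%}")
```

The no-audio leads come out well above 50%, while both with-audio numbers land within a couple of points of an even split.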

Why a New Model Can Reach #1 Quickly — and Why That Doesn’t Mean It Stays

Elo doesn’t care about brand recognition. A well-funded company can’t buy a better result. But early scores with few votes are volatile. HappyHorse now has 13,500+ samples in T2V no-audio with a ±7 confidence interval, and that’s solid. Early-day rankings, when vote counts were in the hundreds, were inherently less reliable: fewer votes means a wider margin of error.
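Here’s a back-of-envelope sketch of why vote count matters so much. It is not how Artificial Analysis computes its intervals (the article doesn’t describe that); it’s a simplified approximation that pretends every vote is a head-to-head against one evenly matched opponent, takes the usual binomial margin of error on the win rate, and converts it to Elo points. The function name and the vote counts of 300 and 1,000 are illustrative assumptions.

```python
import math


def elo_margin(votes: int, win_rate: float = 0.5, z: float = 1.96) -> float:
    """Rough 95% margin of error in Elo points for one head-to-head matchup.

    Simplifying assumption: all votes are against a single opponent.
    """
    # Standard error of an observed win rate after `votes` comparisons.
    se_rate = math.sqrt(win_rate * (1.0 - win_rate) / votes)
    # Slope of elo = 400 * log10(p / (1 - p)) with respect to p.
    elo_per_rate = 400.0 / (math.log(10) * win_rate * (1.0 - win_rate))
    return z * se_rate * elo_per_rate


for n in (300, 1_000, 13_500):
    print(f"{n:>6} votes: about +/- {elo_margin(n):.0f} Elo")
```

Under these assumptions, a few hundred votes leaves a margin in the tens of Elo points, while 13,500 votes brings it down to single digits, which is the same ballpark as the ±7 shown on the board.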

What #1 in Image-to-Video (No Audio) Signals

Users Prefer Its Reference Following in Blind Tests

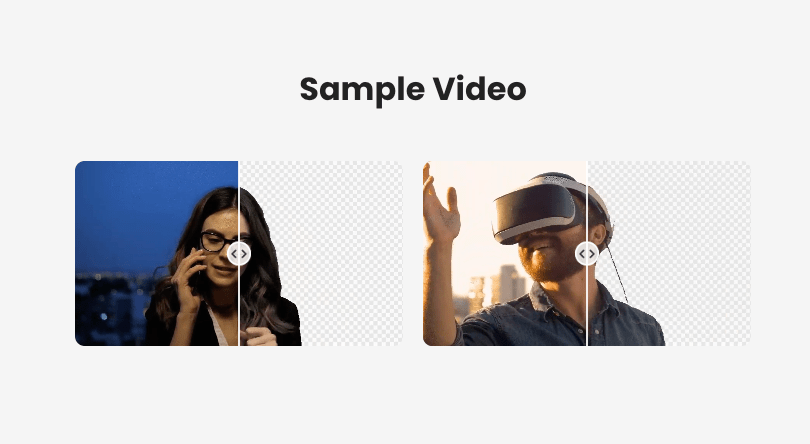

The I2V no-audio category is the one I keep coming back to. You give the model a reference image—a product photo, a portrait—and ask it to animate that into video. The critical question: does the output actually look like the input?

HappyHorse leads at Elo 1,415, 57 points ahead of Seedance 2.0. For anyone making product videos from still images, this is the category that maps most directly to the real workflow.

Reference Following Depends on Input Asset Quality

But the model can only follow what you give it. I’ve spent enough late nights feeding AI video tools mediocre product photos and wondering why the output looked off. Fuzzy edges, leftover background halos, compression artifacts—all of that carries through.

This is where something I’ve talked about before becomes directly relevant: clean background removal and edge cleanup quietly determine whether a generated video looks professional or amateur. No halo, no fringing, sufficient resolution. I check my assets before I rewrite the prompt now. It’s faster and it works more often.

Limitations of Leaderboard Rankings

Elo Reflects Preference, Not Objective Quality

It’s tempting to see #1 and conclude “best model, period.” Elo measures which output users preferred in blind comparison. That’s valuable, but it’s still preference. There’s a known dynamic where users favor certain characteristics (higher contrast, richer color, smoother motion) that may not suit every production context. A more saturated video may win a blind vote but look out of place on a product page that needs neutral visuals.

Rankings Don’t Tell You About Pricing or Access

As of mid-April 2026, HappyHorse’s API pricing is listed as “coming soon.” The official team announced via X that API access launches April 30, 2026—and warned that multiple fake websites have already appeared. So the #1 model on the leaderboard is also a model you can’t currently use in production.

What This Means for Content Teams

Leaderboard Position Is One Signal, Not the Whole Decision

The models you can actually use today at positions 3–5 in T2V without audio (SkyReels V4 at Elo 1,244, Kling 3.0 at 1,243, Kling 3.0 Omni at 1,229) are separated by 15 points. That’s practically a tie, and all three have public APIs with documented pricing. “Best on the leaderboard” and “best for my workflow” are often different things.

Asset Prep Is the Part You Can Control

Models rotate. Leaderboards shift. But the quality of your input assets consistently moves the needle across every model and every ranking reshuffle. Getting background removal and edge quality right before sending anything into an I2V tool is the highest-leverage step. Clean edges, no halos, good resolution. It sounds boring. It is boring. But it’s the gap between output you ship and output that makes you start over.

FAQ

How often do Artificial Analysis Elo scores update? Continuously. Every vote feeds into the calculation in real time. The numbers in this article have an expiration date—check the board directly.

Is HappyHorse-1.0 available to use directly? Not via public API as of mid-April 2026. API access is expected April 30. Be cautious of fake sites claiming to offer early access.

Does a higher Elo mean better results for product video? Not necessarily. Elo reflects general user preference, which may not match your specific needs—neutral color, accurate proportions, clean backgrounds. A lower-Elo model whose strengths match your content type might produce more usable output.

What other models are close to HappyHorse right now? In T2V without audio, none are especially close: Seedance 2.0 trails by 113 points, and positions 3–5 (SkyReels V4, Kling 3.0, Kling 3.0 Omni) sit another 30 or more points back, within 15 points of each other. In the with-audio categories, Seedance 2.0 and HappyHorse are within 12 points, close enough that rankings could swap with a few hundred more votes.

This one took me longer to write than expected, mostly because I kept rechecking numbers that kept changing while I was writing. That’s kind of the point, though. Leaderboard positions are snapshots, not permanent fixtures. What stays constant is your assets, your workflow, and whether you’re setting your tools up to succeed before you hit generate.

Alright, go check the live board if you want the freshest numbers. And maybe clean up a few product photos while you’re at it—that part actually helps more than chasing rankings.
