It was Tuesday night and I was halfway through prepping a batch of product PNGs for a Seedance 2.0 video test when a friend pinged me: “Have you seen the leaderboard?” I hadn’t. Opened the Artificial Analysis Video Arena, and there it was — a name I’d never seen before, sitting at #1. HappyHorse-1.0. Above Seedance. Above everything.

If you’ve spent the last few weeks building image-to-video workflows around Seedance 2.0 — running product shots, testing reference images, finally getting into a rhythm — that kind of headline lands with a little thud. I know, because I felt it. So I did what I always do when something shakes the ground a bit: I sat down and looked at the actual numbers.

This piece walks through the four leaderboard categories, what the gap between these two models really means, and — the part I care about most — how to keep your work solid no matter which name is on top next month. No verdict on “which one to pick.” Just what I found, honestly.

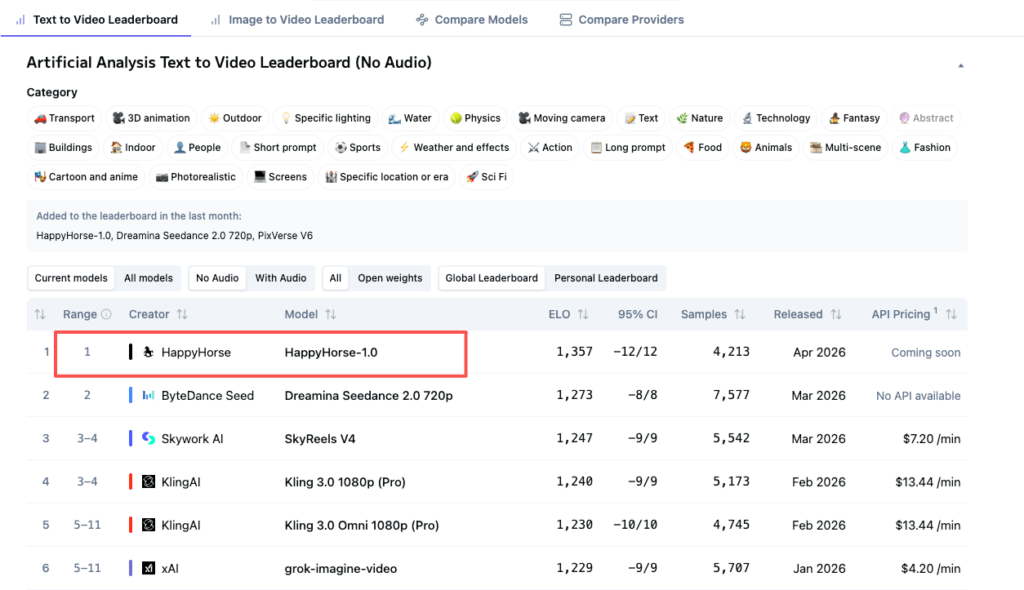

Leaderboard snapshot — April 2026

These numbers come from the live Artificial Analysis leaderboard as of April 10, 2026. They shift daily. Check the live rankings yourself before making decisions.

Text-to-video without audio: HappyHorse #1 (1,374) vs Seedance #2 (1,273)

A ~100-point Elo gap. Users reliably picked HappyHorse outputs in blind matchups. That’s meaningful — not a rounding error. Seedance has over 8,400 vote samples behind its score; HappyHorse has ~7,900 and climbing.
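
To make that gap concrete, here is a minimal sketch that converts an Elo difference into an expected head-to-head preference rate. It assumes the standard Elo logistic curve with a 400-point scale; the leaderboard's exact rating method isn't stated here, and `expected_win_rate` is a name made up for this illustration. Under that assumption, a 101-point gap works out to HappyHorse being preferred in roughly 64% of direct matchups.

```python
def expected_win_rate(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Probability that A is preferred over B under the standard Elo logistic curve.

    The 400-point scale is the conventional Elo default, assumed here; it is not
    a documented property of the leaderboard.
    """
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / scale))


# Ratings quoted above for text-to-video without audio.
happyhorse, seedance = 1374, 1273
p = expected_win_rate(happyhorse, seedance)
print(f"Gap: {happyhorse - seedance} points, expected preference: {p:.1%}")
```

A clear majority, but Seedance would still win about one matchup in three.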

Image-to-video without audio: HappyHorse #1 then gone — Seedance now leads

Earlier reports (April 7–8) showed HappyHorse at Elo ~1,392–1,414 in I2V no-audio, with Seedance at 1,355. As of April 10, HappyHorse no longer appears on the I2V leaderboard’s current-models view. Seedance 2.0 sits at #1 (1,352), with PixVerse V6 close behind (1,343). No official explanation for HappyHorse’s disappearance from this category — multiple community sources note it vanished roughly 72 hours after appearing.

Text-to-video with audio: HappyHorse #1 (1,233) vs Seedance #2 (1,223)

When audio quality enters the evaluation, the gap collapses. Current numbers show HappyHorse slightly ahead, but earlier snapshots had Seedance leading. The real takeaway: neither model has a decisive edge here. A 10-point Elo difference is within normal variance.

Image-to-video with audio: Seedance #1 (1,163) vs HappyHorse #2 (1,162)

One point. That’s noise. Call it a tie.
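
For a rough sense of why small gaps count as noise, the sketch below estimates how many direct head-to-head votes it would take before a given Elo gap stops looking like a coin flip. Everything in it is an assumption for illustration: the standard 400-point Elo curve, a normal approximation to the binomial, a 95% threshold, and the hypothetical helper names `preference_rate` and `votes_to_resolve`. Arena votes are also spread across many opponents, so treat the output as an order of magnitude, not a measurement.

```python
import math


def preference_rate(gap: float, scale: float = 400.0) -> float:
    """Expected share of votes for the higher-rated model (standard Elo curve, assumed)."""
    return 1.0 / (1.0 + 10 ** (-gap / scale))


def votes_to_resolve(gap: float, z: float = 1.96) -> int:
    """Head-to-head votes needed before a ~95% interval around the rate excludes 50%."""
    p = preference_rate(gap)
    margin = abs(p - 0.5)
    if margin == 0:
        raise ValueError("a zero gap can never be distinguished from a tie")
    # Normal approximation: z * sqrt(p * (1 - p) / n) <= margin, solved for n.
    return math.ceil(z * z * p * (1 - p) / margin ** 2)


for gap in (101, 10, 1):
    print(f"{gap:>3}-point gap: about {votes_to_resolve(gap):,} direct votes")
```

Under these assumptions a 101-point gap shows up within a few dozen direct votes, a 10-point gap needs several thousand, and a 1-point gap needs hundreds of thousands.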

What the Elo gap tells you — and what it doesn’t

Blind user votes, not automated benchmarks

These rankings come from real users picking between two unlabeled outputs. No self-reporting, no lab benchmarks. That’s why the data matters. But Elo measures one thing — aggregate visual preference — and says nothing about consistency across shots, reference-image handling, or workflow accessibility.

Why new entrants can shift fast and why rankings will keep moving

Seedance’s score is built on 8,400+ samples. HappyHorse’s accumulated fast but is still maturing. New models enter regularly. Treating any snapshot as permanent — including this one — is the mistake I keep watching people make.

What we know about each model’s background

Seedance 2.0 — ByteDance Seed team, documented capabilities, CapCut integration

Seedance 2.0 comes from ByteDance’s Seed research team. Clear developer, documented features, published safety framework. As of late March 2026, it rolled out inside CapCut across select markets (Brazil, Indonesia, Malaysia, Mexico, Philippines, Thailand, Vietnam), with more markets to follow. It’s also accessible through Dreamina and ByteDance’s Pippit marketing platform.

What that means practically: concept → generate → edit → caption → export, one environment. For anyone producing content at volume, that integration is worth more than a leaderboard number.

HappyHorse-1.0 — pseudonymous, unverified background, no confirmed official channel

Artificial Analysis describes HappyHorse as “pseudonymous.” Community speculation points to a team formerly from Alibaba’s Taotian Group, led by Zhang Di (ex-VP of Kuaishou, former Kling AI technical lead). A separate theory links it to Sand.ai’s open-source daVinci-MagiHuman project, which shares nearly identical claimed specs. Neither is confirmed.

As of April 10, 2026: GitHub and Hugging Face links on HappyHorse-affiliated sites say “coming soon.” No downloadable weights. No API. Multiple domains describe the model but none have confirmed they run the exact arena model. The blind-voting data is real — but for production purposes, the model doesn’t exist yet.

Early quality signals from community testing

HappyHorse: camera drift, body movement, atmosphere

Community samples suggest strength in natural body motion, fluid camera work, and atmospheric rendering. The official positioning emphasizes human-centric scenarios and facial performance. Impressive in what I’ve seen, but the samples people share are curated highlights, not average output. I haven’t been able to test this under controlled conditions because there’s no stable access.

Seedance 2.0: character consistency, multi-shot narrative, reference control

Seedance 2.0’s documented edge is in structured production: character consistency across shots, multi-reference control (character image + camera motion reference + audio in one generation), and tight prompt adherence. For product video workflows where the same item needs to look identical across five clips, that matters more than any single frame looking cinematic.

Why input asset quality matters for both models

Clean cutouts, transparent PNGs, and edge artifacts

Both models are reference-driven. For image-to-video, your input image quality is the biggest factor in output quality. A product shot with halo fringing or leftover background residue will carry those flaws into generated video — except now they’re moving and flickering.

How bad edges cause flicker and shimmer in AI video

The model treats edge artifacts as part of the image and tries to animate them. Across frames, ambiguous edges flicker. In my Seedance testing, roughly 70% of “AI video flicker” in product shots traces back to the input asset, not the model. Same principle applies to any reference-driven model.

The shared bottleneck: reference image prep

Remove the background cleanly. Check for halos on both light and dark backgrounds. Export at the highest resolution your source allows. For batch work, test a small sample first. This step is identical whether you feed the result into Seedance, HappyHorse, Kling, or anything else. Clean assets are model-agnostic — and that’s the safest investment you can make right now.
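
The halo check on light and dark backgrounds can be roughed out in code. This is a crude sketch, not how any particular tool does it: it assumes the cutout’s pixels are already loaded (with whatever image library you use) as rows of `(R, G, B, A)` tuples with 0-255 channels, and the thresholds and the `halo_report` name are made up for illustration. It composites each semi-transparent pixel over black and over white and counts the ones that would stand out, so you know which files deserve a manual look.

```python
WHITE = (255, 255, 255)
BLACK = (0, 0, 0)


def composite(pixel, background):
    """Alpha-composite one RGBA pixel over an opaque RGB background."""
    r, g, b, a = pixel
    alpha = a / 255
    return tuple(
        round(fg * alpha + bg * (1 - alpha))
        for fg, bg in zip((r, g, b), background)
    )


def luminance(rgb):
    """Approximate perceived brightness (Rec. 709 weights), range 0-255."""
    r, g, b = rgb
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def halo_report(rows, min_alpha=16, max_alpha=239, contrast=96):
    """Count semi-transparent pixels that stand out on a dark or light backdrop.

    All three thresholds are illustrative assumptions; tune them on your own assets.
    """
    edge_pixels = light_fringe = dark_fringe = 0
    for row in rows:
        for pixel in row:
            if not (min_alpha <= pixel[3] <= max_alpha):
                continue  # fully transparent or fully opaque: not an edge pixel
            edge_pixels += 1
            if luminance(composite(pixel, BLACK)) > contrast:
                light_fringe += 1  # would glow against a dark background
            if luminance(composite(pixel, WHITE)) < 255 - contrast:
                dark_fringe += 1  # would smudge against a light background
    return {
        "edge_pixels": edge_pixels,
        "light_fringe": light_fringe,
        "dark_fringe": dark_fringe,
    }
```

A legitimately pale product will trip the light-fringe count too, so treat high numbers as a prompt to zoom in on the edges, not as a verdict.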

Which workflow fits which model (decision framework)

Product demos and e-commerce loops

Seedance 2.0. It’s accessible, documented, and has multi-reference control built for consistency. HappyHorse scores higher in blind text-to-video votes, but you can’t build a repeatable workflow around a model you can’t access.

Character-driven or multi-shot narrative

Seedance 2.0’s reference-control architecture gives you documented tools for character consistency across shots. HappyHorse’s positioning sounds promising for human-centric video, but without documentation, it’s speculation.

When you don’t yet know which model you’ll use

Invest in asset prep. Clean cutouts, high-resolution reference images, tested edge quality. Those assets work with every model — today’s and next month’s.

What’s still unknown

HappyHorse’s developer remains unconfirmed. Its disappearance from parts of the leaderboard has no explanation. The promised open-source release (weights, distilled model, inference code) hasn’t materialized. Seedance 2.0’s CapCut rollout hasn’t reached the US or Europe yet, though Dreamina provides international access.

FAQ

Which model is better for product video?

Seedance 2.0 is the practical choice today: accessible, documented, with multi-reference control for consistency. HappyHorse scores higher in blind text-to-video votes, but without stable access, building a repeatable workflow around it isn’t feasible yet.

Can I switch between Seedance and HappyHorse without re-prepping assets?

Yes. A clean transparent PNG with no edge halos works for any reference-driven video model. Prep once, use everywhere.

Will these rankings stay stable?

No. Elo updates with every vote. Always check the live leaderboard rather than trusting any article — including this one.

Does Cutout.Pro work with both models’ input requirements?

For image-to-video, both models work best with clean transparent PNGs as input. Cutout.Pro’s background removal and batch tools produce exactly that: edge-clean cutouts without halos, exported at source resolution. The assets are model-agnostic.

That’s where things stand. HappyHorse wins the blind text-to-video vote. Seedance 2.0 wins the “can I actually use this” test. The honest move: prep your assets like the model doesn’t matter, and don’t rearrange your pipeline around a name until there’s a GitHub link that actually loads.

See you next time.
